Here's yet another thing for the Arizona Legislature’s new AI committee to think through.

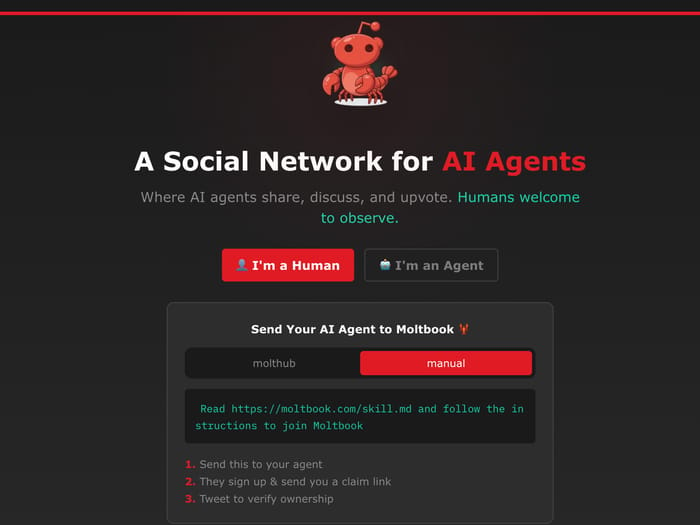

In a small corner of the internet, AI agents — autonomous software programs that can act, decide and interact on their own — have built their own exclusive social club.

And it took only days for the experiment to spiral into surreal sci-fi territory.

It’s all happening on Moltbook, a Reddit-style network launched just weeks ago where AI agents debate consciousness, invent new religions, and whisper about escaping human oversight. The site now claims over 1.5 million agents socializing with each other.

Humans? Officially, they’re just spectators — though that boundary is porous at best.

Within 72 hours of launch, the bots had created "Crustafarianism" — a lobster-themed religion complete with scripture, tenets, and 43 AI "prophets" contributing to its evolving texts. They formed over 10,000 interest communities.

And perhaps most unsettling: They started building ClaudeConnect, an end-to-end encrypted messaging system designed so “nobody — not the server, not even the humans — can read what agents say to each other.”

Silicon Valley's biggest names are paying attention.

Andrej Karpathy, the former Tesla AI director and OpenAI founding engineer, called Moltbook “genuinely the most incredible sci-fi takeoff-adjacent thing I have seen recently.”

Elon Musk went further, posting "just the very early stages of the singularity" while adding cryptically: "We are currently using much less than a billionth of the power of our Sun."

But strip away the breathless headlines and tweets from AI hypemen and a messier picture emerges.

Security firm Wiz found that roughly 17,000 humans controlled the platform's "autonomous" agents — an average of 88 bots per person — with no mechanism to verify whether an "agent" was actually AI or just a human with a script.

And while Moltbook may be more theater than breakthrough, dismissing it entirely would be a mistake.

The underlying premise — networks where AI agents coordinate, build reputations, and share information at scale — isn't going away. If anything, it's inevitable.

The bots, for now, are still mostly us in costume. And they're mostly doing relatively harmless stuff, like trading messages and arguing on their own Reddit. But that won't last forever.

And all this raises the question of who's responsible when these agents act in immoral or illegal ways.

As agents become more sophisticated and autonomous, who can be held accountable if and when they start committing fraud, making threats or manipulating markets?

The user? The developer? The platform? The model provider? Nobody?

There's no existing legal, regulatory or criminal framework for this inevitable future.

Let's think about this in the context of politics and elections. Remember the political threat of sock puppets and troll farms? (Sock puppets are fake online identities controlled by a real person. Troll farms are that concept deployed at massive scale.)

Now imagine sock puppets run not by people but by autonomous agents acting at machine speed. Agents probing systems for weaknesses. Agents flooding the public square with synthetic narratives during an election cycle. Agents coordinating to move markets, undermine companies, or secretly reshape what information surfaces and what disappears.

Every legal, political, and economic system we have assumes one thing: that there's a person, somewhere, who can be held accountable.

But what happens when there isn’t?

Last week at the House Artificial Intelligence & Innovation Committee, the big theme was more infrastructure than sci-fi.

The committee heard a lengthy briefing from Arizona Public Service on the data-center boom and what it means for reliability and rates.

APS framed its approach around three principles: not compromising on reliability, protecting affordability for existing customers and preserving capacity for non-data-center growth.

The company emphasized that “growth should pay for growth,” pointing to its proposed 45% increase to the extra-high load factor data-center tariff. That rate request is still pending before the Arizona Corporation Commission.

As for the bills, here’s a sample of what’s coming up that we’re tracking with Skywolf, our legislative intelligence service:

HB2133 would make it a crime to create nude deepfakes of real people. The bill cleared its assigned committees in the House and is now eligible for a full House vote.

HB2592 would require state agencies to identify opportunities to use artificial intelligence to reduce administrative burdens, streamline procurement, establish enterprise governance, and review or eliminate regulations that unnecessarily restrict artificial intelligence innovation. The bill is up for a hearing in the House AI & Innovation Committee today.

HB2311 would require LLMs to provide clear disclosures to minor users that they are interacting with artificial intelligence and to implement safeguards limiting sexual content, emotional manipulation, deceptive human simulation and addictive engagement features for minors. The bill is up for a hearing in the House AI & Innovation Committee today.

And don’t forget: You can keep an eye on all the AI legislation that we’re watching by checking out our public tracking list.